On Human to Robot Motion Mapping for the Execution of Complex Tactile American Sign Language Tasks with a Humanoid Robot

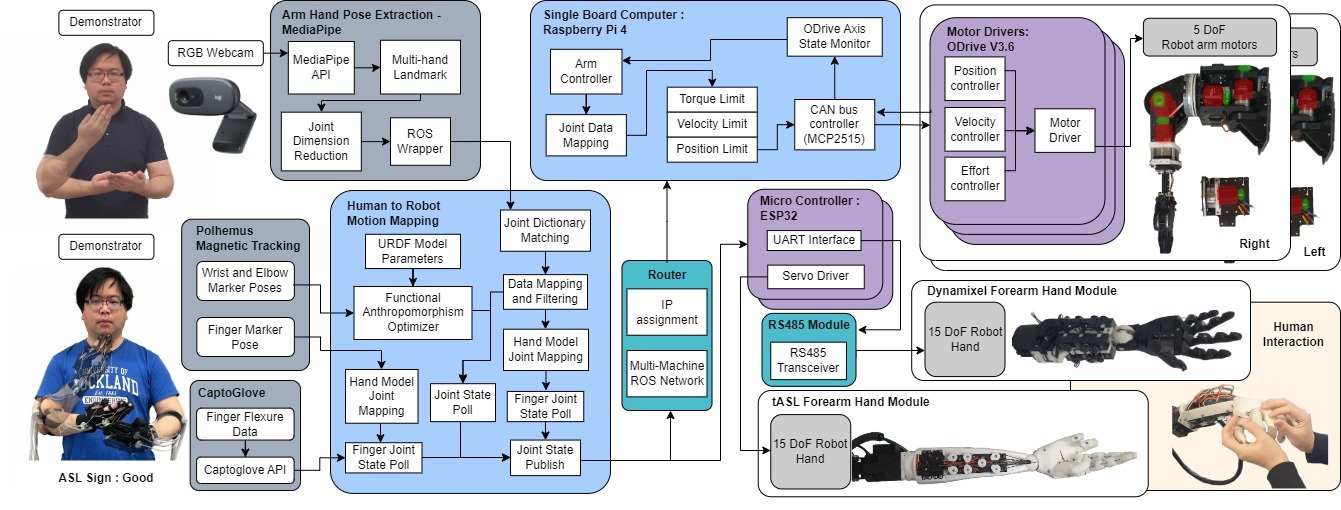

An estimated 0.2% of the world population lives with severe deafblindness, and approximately 1.5 million Americans use tactile American Sign Language (t-ASL) as their primary form of communication. To allow them to communicate without an in-person interpreter, a bimanual robotic manipulation platform has been employed to mimic human motions and execute complex bimanual t-ASL signs. In particular, a human-to-robot motion mapping framework has been developed for this platform to extract signing trajectories from motion capture data of a human demonstrator. The collected data has also been used to generate preprogrammed poses for playing back text prompts on the humanoid robot system. Following the functional anthropomorphism approach, the human-to-robot motion mapping problem has been formulated as a constrained optimisation problem that maps the human trajectories to corresponding robot trajectories that are as human-like as possible. The effectiveness of the proposed system has been experimentally validated through: i) the execution of complex, bimanual t-ASL signs and ii) the interpretation of the executed t-ASL signs by humans with simulated deafblindness.
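
To make the functional anthropomorphism idea concrete, the following is a minimal sketch under strongly simplifying assumptions: a hypothetical planar 2-link arm stands in for the real bimanual platform, the "functional" constraint is that the robot wrist reaches the human wrist position exactly, and the "anthropomorphic" objective is the squared angular distance to the human joint angles. For this toy arm the feasible set is just the two inverse kinematics solutions (elbow up and elbow down), so the constrained problem can be solved exactly by enumeration. The link lengths and all function names are illustrative assumptions, not the authors' implementation.

```python
import math
from typing import List, Tuple

# Hypothetical link lengths (metres) of a planar 2-link robot arm (assumption).
L1_ROBOT = 0.30
L2_ROBOT = 0.25

Joints = Tuple[float, float]
Point = Tuple[float, float]


def forward_kinematics(q: Joints) -> Point:
    """Planar 2-link forward kinematics: joint angles -> wrist position."""
    q1, q2 = q
    x = L1_ROBOT * math.cos(q1) + L2_ROBOT * math.cos(q1 + q2)
    y = L1_ROBOT * math.sin(q1) + L2_ROBOT * math.sin(q1 + q2)
    return x, y


def ik_solutions(target: Point) -> List[Joints]:
    """All joint configurations that place the wrist exactly at `target`."""
    x, y = target
    c2 = (x * x + y * y - L1_ROBOT**2 - L2_ROBOT**2) / (2 * L1_ROBOT * L2_ROBOT)
    if c2 < -1.0 or c2 > 1.0:
        raise ValueError("target outside robot workspace")
    s2_abs = math.sqrt(1.0 - c2 * c2)
    solutions = []
    for s2 in (s2_abs, -s2_abs):  # elbow-up and elbow-down branches
        q2 = math.atan2(s2, c2)
        q1 = math.atan2(y, x) - math.atan2(L2_ROBOT * s2, L1_ROBOT + L2_ROBOT * c2)
        solutions.append((q1, q2))
    return solutions


def angle_diff(a: float, b: float) -> float:
    """Signed difference a - b wrapped to (-pi, pi]."""
    return math.atan2(math.sin(a - b), math.cos(a - b))


def anthropomorphic_cost(q: Joints, q_human: Joints) -> float:
    """Squared angular distance between robot and human joint configurations."""
    return sum(angle_diff(a, b) ** 2 for a, b in zip(q, q_human))


def map_pose(wrist_target: Point, q_human: Joints) -> Joints:
    """Functional constraint: reach the wrist target exactly.
    Anthropomorphic objective: among feasible configurations, pick the most human-like."""
    return min(ik_solutions(wrist_target),
               key=lambda q: anthropomorphic_cost(q, q_human))


def map_trajectory(wrist_traj: List[Point], human_joint_traj: List[Joints]) -> List[Joints]:
    """Map a captured human trajectory sample by sample."""
    return [map_pose(p, qh) for p, qh in zip(wrist_traj, human_joint_traj)]
```

For example, with `target = forward_kinematics((0.4, 0.9))`, calling `map_pose(target, (0.5, 1.0))` recovers the elbow-up configuration near `(0.4, 0.9)`, whereas a human posture such as `(1.2, -0.8)` selects the elbow-down solution that reaches the same wrist position. For a redundant arm the feasible set is continuous, so a numerical constrained solver would replace the enumeration; that extension is not shown here.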

OpenBMP Page:

https://newdexterity.org/openbmp